To whom does history belong: to those who live it or to those who record it?

For too long, we have accepted that history is told by hegemonic academic institutions, dominated by wealthy Caucasian individuals, who decide who has access to historical knowledge.

As the popular saying goes, “History is written by the victors,” or rather, the oppressors.

But what happens to the voices that go unheard? Consider the stories of the artisans who keep a rich cultural tradition alive with their hands and creativity.

“Herencia means Heritage” is a project that uses community-engaged research and creative storytelling to highlight the crucial role of the artisans of traditional Talavera pottery in Mexico.

This project does not focus on the materials, institutions or technical regulations that, although they appear to protect this cultural legacy, have served as tools of monopolization.

These regulations exclude the true protagonists, the artisans themselves, preventing them from opening their own workshops, since the certification of origin is reserved for those who can afford it.

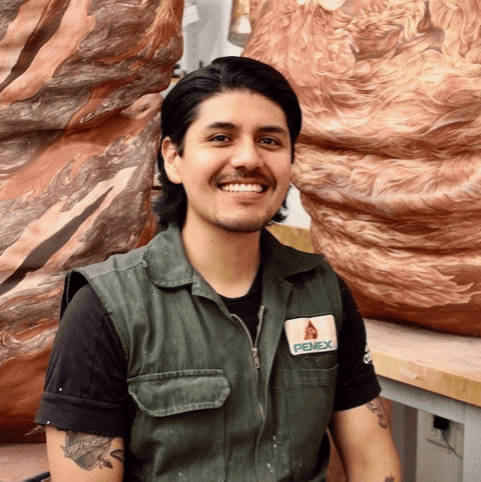

“Herencia means Heritage” aims to break down these barriers and return the spotlight to those who deserve it. Through creative research, I seek to shed light on the experiences, challenges and triumphs of Talavera artisans, recognizing the priceless value of their work.

At the end of the day, history should belong to those who live it. Today, we want to rewrite that history together, giving voice to the artisans who keep the tradition of Talavera ceramics alive. It’s time to listen, learn and value the stories that really matter.